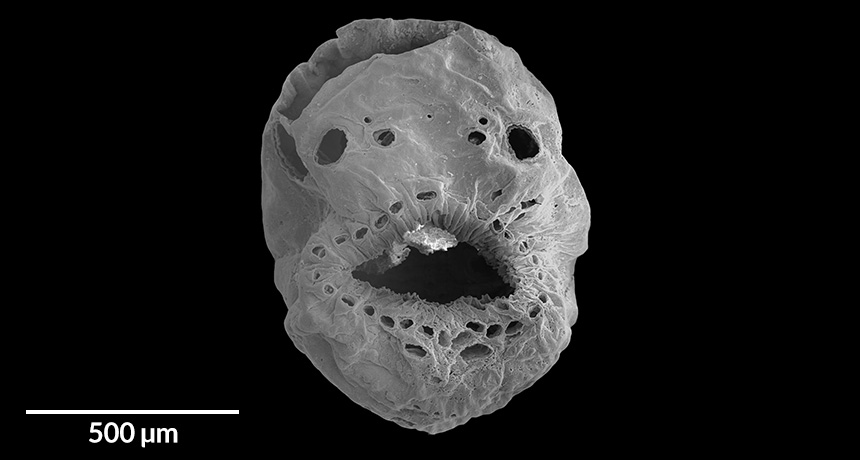

Now there are two bedbug species in the United States

Bedbugs give me nightmares. Really. I have dreamt of them crawling up my legs while I lie in bed. These are common bedbugs, Cimex lectularius, and after largely disappearing from our beds in the 1950s, they have reemerged in the last few decades to wreak havoc in our homes, offices, hotels and even public transportation.

Now there’s a new nightmare. Or rather, another old one. It’s the tropical bedbug, C. hemipterus. Its presence has been confirmed in Florida, and the critters could spread to other southern states, says Brittany Campbell, a graduate student at the University of Florida in Gainesville, who led a new study that tracked down the pests.

Tropical bedbugs are found in a band of land running between 30° N and 30° S latitude. In the last 20 years or so, they’ve been collected from Tanzania, Sri Lanka, Malaysia, Australia, Rwanda and more. Back in 1938, some were collected in Florida. There were more reports of the species in the following years, but none since the 1940s.

Then, in 2015, researchers at the Insect Identification Laboratory at the University of Florida identified bedbugs sent to the lab from a home in Brevard County, Florida, as tropical bedbugs. To confirm the analysis, researchers went to the home and collected more samples. They were indeed tropical bedbugs, the team reports in the September Florida Entomologist.

The family thought that the bedbugs must have been transported unknowingly into the house by one of the people who lived there. But no one living in the home had traveled outside the state recently, let alone outside the country. This suggests that tropical bedbugs can be found elsewhere in Florida, the team concludes.

Additional evidence comes from the Florida State Collection of Arthropods, which holds two female tropical bedbugs that, according to their label, were collected in Orange County, Florida, on June 11, 1989, from bedding. “Whether this species has been present in Florida and never disappeared, or has been reintroduced and remains in small populations, is not currently known,” the researchers write.

Why hasn’t anyone noticed? Well, people don’t usually send bedbugs to entomologists when they have an infestation, and your average victim isn’t going to notice the difference between the two species. “Both species are very similar,” Campbell says. Not only do they look alike, but they also both “feed on blood, hide in cracks and crevices and have similar lifestyles.” Plus, there’s been little research directly comparing the two species, she notes, so scientists don’t know how infestations might differ.

Just to give us all a few more nightmares, Campbell points out something else: Although there’s probably no reason to worry that the creepy critters will spread as climate change warms the globe, the species could still move north “because humans provide nice conditions for bedbugs to develop.”